LearningPulse

I redesigned the educator flow from document upload to review, helping compress a multi-day manual process into a faster, structured analysis experience while translating unreliable AI outputs into trustworthy UI states.

Role: UX Developer / Product Designer

Timeline: 7 months

Industry: GenAI EdTech

Focus: 0→1 workflow, AI UX, production implementation

6 new NJ district pilot signups, from improved website positioning

3 conference invitations, to NJ and Alaska education conferences

84.6% demo completion rate: 11 of 13 completed the full demo flow

The Problem

Educators need AI-generated insights they can actually trust

LearningPulse needed a product experience that could absorb technical variability without making teachers feel lost.

1. Messy inputs

Student documents varied in format, readability, completeness, and quality.

2. Variable AI outputs

LLM- and NLP-based systems could return partial, inconsistent, or low-signal results.

3. Trust gap

Teachers needed to understand what happened, what it meant, & what to do next.

DISCOVERY

What Users Needed Most

5 School Admins / Leaders

3 Teachers

Focus: Qualitative review & workflow pain points

INSIGHT 01

Time pressure was the biggest pain point

Users did not have enough time to manually review every artifact in depth.

INSIGHT 02

Value depended on instructional usefulness

Insights had to support real decisions, not just summarize text.

INSIGHT 03

Trust mattered as much as speed

Users needed to understand where insights came from and what to do next.

Designing for the Educator Workflow

The primary personas, school administrators and teachers, needed a system that felt like an extension of their pedagogical expertise, not a black-box replacement. This required balancing technical AI capabilities with familiar, credible UX patterns.

Workflow Transformation

Reframing a Multi-Day Workflow into Minutes

One of the biggest opportunities was workflow compression.

BEFORE

Manual Review Workflow

1. Gather student documents

Collecting physical or scattered digital files from multiple sources.

2. Read each sample individually

Time-consuming manual review of every single submission.

3. Compare patterns across students

Trying to hold cross-student insights in memory or on scratchpads.

4. Manually synthesize strengths & needs

Drafting summaries from fragmented notes.

5. Identify next instructional steps

Arriving at actionable decisions only after heavy cognitive load.

Often takes days

AFTER

LearningPulse Workflow

1. Upload student work & criteria

Bulk upload digital files or scans into a single unified workspace.

2. Run AI-assisted analysis

The system automatically processes the documents and extracts key themes across the entire cohort.

3. Review structured summaries & patterns

Clear, scannable dashboards highlight common strengths and specific needs.

4. Drill into evidence when needed

Click any insight to see the exact student quotes or work samples behind it.

5. Move to instructional decision-making faster

Spend energy on planning interventions rather than organizing data.

Can take minutes

PRODUCT OVERVIEW

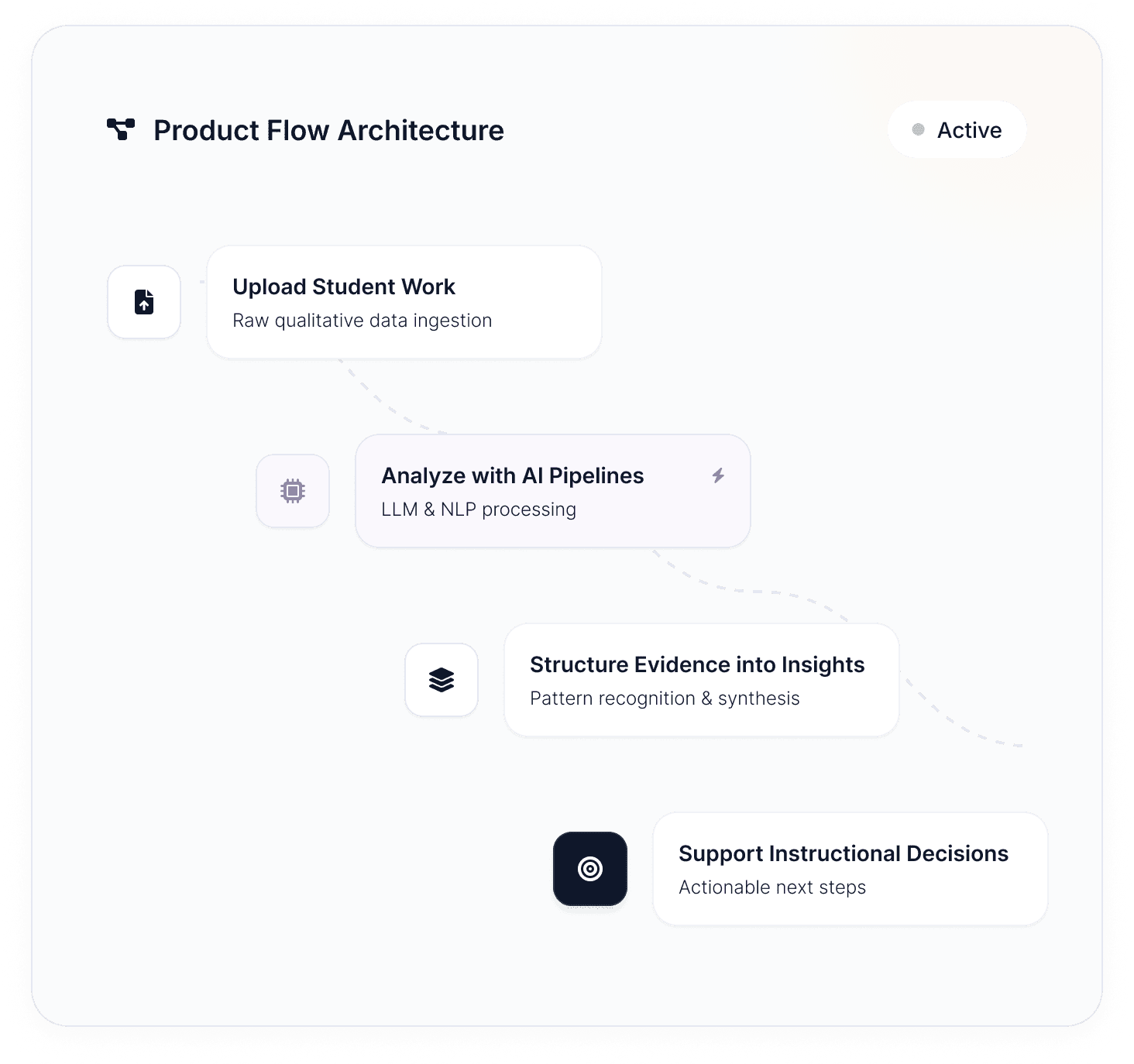

LearningPulse is a GenAI-assisted EdTech product that helps educators review qualitative student work, such as writing samples and documents, and turn that material into structured instructional insight.

The platform supports reflection, pattern recognition, and instructional decision-making by helping teachers move from raw student work to organized evidence and next-step insight.

Responsibilities

My Role

I worked at the boundary of product design and implementation in a 0→1 environment. My role went beyond mockups and handoff: I helped define the workflow, shape how the system behaved, and ship production improvements.

Workflow Design

Defined upload, configure, analysis, and review flows for educators.

AI UX Translation

Turned LLM, Bedrock, and spaCy system behavior into usable UI states.

Product Positioning

Improved website and demo clarity for prospective pilot districts.

Implementation

Wrote and shipped production code in Vue, TypeScript, Python, and SQL.

Context

Constraints That Shaped the Work

Designing a 0→1 AI product required navigating technical realities, unstructured data, and the need to establish trust rapidly with new users.

01

Early-stage product velocity

Most screens were implemented directly in code.

02

Unstructured inputs

Educator-uploaded materials varied in format & completeness.

03

Variable AI outputs

The UI needed stable rendering rules for inconsistent output structures.

04

First-run clarity

New users needed to know exactly what to do next.

05

Edge-case resilience

The product had to preserve trust even when results were incomplete.

Designing for System Logic

Addressing these constraints required mapping UI states directly to backend capabilities. Every design decision was grounded in the reality of what the AI models could reliably produce.

State Mapping

Defined clear visual language for loading, partial success, and error states.

Component Architecture

Built modular Vue components that could handle varying data payloads gracefully.

SYSTEM FLOW

Simplified Architecture

Unstructured Input (PDF, DOCX, TXT) → Data Normalization (Python / spaCy) → LLM Processing (variable outputs) → Structured Schema (JSON mapping) → Stable UI Render (Vue / TypeScript)
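The flow above can be sketched as a chain of plain functions. This is a minimal Python sketch, not the production pipeline. A stdlib regex cleanup stands in for the Python/spaCy normalization step, and `analyze` and `to_schema` are hypothetical callables that stand in for the LLM call and the JSON mapping. What it illustrates is that the model's variable output never reaches the UI directly: it always passes through the schema step first.

```python
import re
from typing import Any, Callable, Dict


def normalize_text(raw: str) -> str:
    """Hypothetical stand-in for the Python/spaCy normalization stage."""
    text = raw.replace("\r\n", "\n")
    text = re.sub(r"[ \t]+", " ", text)     # collapse runs of spaces and tabs
    text = re.sub(r"\n{3,}", "\n\n", text)  # collapse long runs of blank lines
    return text.strip()


def run_pipeline(
    documents: Dict[str, str],
    analyze: Callable[[Dict[str, str]], Any],
    to_schema: Callable[[Any], dict],
) -> dict:
    """Input -> normalization -> model -> schema; only the schema output is rendered."""
    # 1. Normalize each document and drop any that contain no usable text.
    cleaned = {doc_id: normalize_text(text) for doc_id, text in documents.items()}
    usable = {doc_id: text for doc_id, text in cleaned.items() if text}
    # 2. Send normalized text to the model; the shape of what comes back may vary.
    raw_output = analyze(usable)
    # 3. Map the variable output onto the fixed structure the UI knows how to render.
    return to_schema(raw_output)


# Usage with stubbed stages (no real model call):
docs = {"doc_1": "  Strong   thesis.\r\n\r\n\r\n\r\nClear claims. ", "doc_2": "   "}
result = run_pipeline(
    docs,
    analyze=lambda d: {"seen": sorted(d)},
    to_schema=lambda out: {"sections": [], "documents_analyzed": out.get("seen", [])},
)
print(result)  # {'sections': [], 'documents_analyzed': ['doc_1']}
```

Keeping each stage a separate function means the model or the normalization logic can change without touching the rendering side.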

Reference Implementation

One hi-fi screen (light + dark) used as reference implementation

Defining the Data → UI Contract

AI output → renderable UI

Creating a reliable contract between backend AI processing and frontend rendering that covers every result state: complete, partial, and no signal.

Unstructured inputs

Student work documents uploaded by educators (varied format and quality)

UI-ready insight structure

sections[] (ordered), each containing:

section_title

summary (plain language)

evidence[] with document_id

status (complete / partial / no_signal)

notes_for_ui (optional)

Consistent UI modules

Predictable cards/blocks in the interface so teachers can scan and drill down
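Here is a minimal Python sketch of the contract. It assumes the model output has already been parsed from JSON into dicts and lists. The field names come from the structure above. The `coerce_sections` helper, the "Untitled section" default, and the fallback rule are hypothetical choices: an unrecognized status becomes partial if evidence exists and no_signal if not. These choices make malformed output degrade to a renderable state instead of breaking the page.

```python
from dataclasses import dataclass
from typing import Any, List, Optional

VALID_STATUSES = {"complete", "partial", "no_signal"}


@dataclass
class Evidence:
    document_id: str


@dataclass
class Section:
    section_title: str
    summary: str                        # plain-language summary, may be empty
    evidence: List[Evidence]
    status: str                         # one of VALID_STATUSES
    notes_for_ui: Optional[str] = None


def _coerce_evidence(items: Any) -> List[Evidence]:
    # Accept {"document_id": "doc_1"} or a bare "doc_1"; ignore anything else.
    if not isinstance(items, list):
        return []
    out = []
    for item in items:
        if isinstance(item, dict) and isinstance(item.get("document_id"), str):
            out.append(Evidence(item["document_id"]))
        elif isinstance(item, str):
            out.append(Evidence(item))
    return out


def coerce_sections(raw: Any) -> List[Section]:
    """Map loosely structured model output onto the contract without raising."""
    items = raw.get("sections") if isinstance(raw, dict) else None
    if not isinstance(items, list):
        return []
    sections = []
    for item in items:
        if not isinstance(item, dict):
            continue  # skip fragments that cannot become a section
        evidence = _coerce_evidence(item.get("evidence"))
        summary = item.get("summary")
        status = item.get("status")
        if not isinstance(status, str) or status not in VALID_STATUSES:
            # Hypothetical fallback: never claim "complete" for an unrecognized status.
            status = "partial" if evidence else "no_signal"
        notes = item.get("notes_for_ui")
        sections.append(
            Section(
                section_title=str(item.get("section_title") or "Untitled section"),
                summary=summary if isinstance(summary, str) else "",
                evidence=evidence,
                status=status,
                notes_for_ui=notes if isinstance(notes, str) else None,
            )
        )
    return sections
```

The design choice is to validate once at the boundary so every downstream component can assume well-typed fields.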

What I owned

Partnered with engineering to translate LLM + spaCy outputs into clear, testable UI states and UI-ready data structures

Defined how insight sections should be structured so the front end can reliably parse and render

Clarified empty/error/partial states so educators always had an understandable next step

Why it matters

Prevents UI ambiguity when outputs change

Makes insights scannable and explainable

Enables consistent iteration without redesigning every screen

Structure in practice

How the data contract translates to UI components

Data Structure (input)

section:
  section_title: "Writing Quality"
  summary: "Strong evidence..."
  status: "complete"
  evidence: [doc_1, doc_3]

UI Component (output)

A "Writing Quality" card labeled Complete, showing the summary "Strong evidence..." and evidence references to Doc 1 and Doc 3.
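The same mapping can be sketched as a small pure function. This Python version is illustrative only, since in the product this rendering lived in Vue/TypeScript components. The `to_card` function, the status labels, and the rule that turns "doc_3" into "Doc 3" are hypothetical. The input is assumed to be a section that already satisfies the contract.

```python
from typing import Dict, List

STATUS_LABELS: Dict[str, str] = {
    "complete": "Complete",
    "partial": "Partial",
    "no_signal": "No signal",
}


def evidence_label(document_id: str) -> str:
    # Hypothetical display rule: "doc_3" -> "Doc 3"; other ids are shown unchanged.
    prefix, _, number = document_id.partition("_")
    if prefix == "doc" and number.isdecimal():
        return f"Doc {int(number)}"
    return document_id


def to_card(section: dict) -> dict:
    """Turn one contract-conforming section into the fields a card displays."""
    labels: List[str] = [evidence_label(e["document_id"]) for e in section["evidence"]]
    return {
        "title": section["section_title"],
        "status_label": STATUS_LABELS[section["status"]],
        "summary": section["summary"],
        "evidence_labels": labels,
    }


card = to_card({
    "section_title": "Writing Quality",
    "summary": "Strong evidence...",
    "status": "complete",
    "evidence": [{"document_id": "doc_1"}, {"document_id": "doc_3"}],
})
print(card["evidence_labels"])  # ['Doc 1', 'Doc 3']
```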

Edge state clarity

Every possible state has a clear UI representation

Complete

All evidence found, full summary generated

Partial

Some evidence found, limited summary available

No signal

No relevant evidence detected in documents
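One way to make "what happened, what it means, and what to do next" hard to skip is to require all three for every status. Here is a minimal Python sketch, assuming the three statuses above. The `StateCopy` type, the `copy_for` helper, the fallback entry, and all of the wording are hypothetical placeholders, not the product's actual copy.

```python
from typing import Dict, NamedTuple


class StateCopy(NamedTuple):
    what_happened: str
    what_it_means: str
    next_step: str


# Placeholder wording; every status must supply all three parts.
STATE_COPY: Dict[str, StateCopy] = {
    "complete": StateCopy(
        "Evidence was found in the uploaded work.",
        "This summary reflects all of the documents analyzed.",
        "Review the summary, then open the evidence to check specifics.",
    ),
    "partial": StateCopy(
        "Some evidence was found.",
        "This summary is limited and may not cover every document.",
        "Open the available evidence, or add more work and re-run the analysis.",
    ),
    "no_signal": StateCopy(
        "No relevant evidence was detected in these documents.",
        "The documents may not address this area.",
        "Check the criteria for this section or upload different work.",
    ),
}

# Used when a status arrives that the UI does not recognize.
FALLBACK_COPY = StateCopy(
    "This section could not be processed.",
    "No conclusions should be drawn from it yet.",
    "Try running the analysis again.",
)


def copy_for(status: str) -> StateCopy:
    return STATE_COPY.get(status, FALLBACK_COPY)
```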

Decision enabled

Educators can trust the interface to handle variable AI outputs gracefully, reducing cognitive load and enabling faster pattern recognition across student work.

Designing for Resilience: State-Driven UI

Why This Matters:

I treated edge cases as first-class UX requirements because AI workflows rarely follow the "happy path."

The goal was that teachers always understand what happened, what it means, and what to do next.

Design Principle / Implementation Notes

State Management: Vue composables track upload status, analysis progress, and error states.

Messaging Strategy: All copy emphasizes user value and next steps, never technical jargon.

Component Library: Reusable EmptyState, ProgressIndicator, and ErrorBoundary components.

Testing Coverage

Outcome

By designing for all six states from the start, we reduced "what do I do next?" confusion and built trust through transparency. Teachers could see exactly where they were in the process and what to expect.
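The product tracked upload status, analysis progress, and error states in Vue composables. The transition rules themselves can be sketched in a few lines of Python. This is a hypothetical illustration: the state names and allowed transitions below are assumptions and are not the six states the design actually specified. With explicit transitions, an impossible jump (for example, from uploading straight to done) raises an error instead of silently rendering a confusing screen.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set

# Hypothetical workflow states and the moves allowed out of each one.
TRANSITIONS: Dict[str, Set[str]] = {
    "idle": {"uploading"},
    "uploading": {"analyzing", "error"},
    "analyzing": {"done", "error"},
    "done": {"idle"},                             # start a new analysis
    "error": {"idle", "uploading", "analyzing"},  # reset, re-upload, or retry
}


@dataclass
class AnalysisRun:
    state: str = "idle"
    progress: float = 0.0                # fraction complete while analyzing
    error_message: Optional[str] = None

    def move_to(self, new_state: str, error_message: Optional[str] = None) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot move from {self.state!r} to {new_state!r}")
        self.state = new_state
        # Keep an error message only while in the error state.
        self.error_message = error_message if new_state == "error" else None
        if new_state in ("idle", "uploading", "analyzing"):
            self.progress = 0.0
        elif new_state == "done":
            self.progress = 1.0

    def report_progress(self, fraction: float) -> None:
        if self.state != "analyzing":
            raise ValueError("progress only applies while analyzing")
        self.progress = min(max(fraction, 0.0), 1.0)  # clamp to [0, 1]
```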

Information Hierarchy

From "Next Step" → Insight Review

New Analysis (Step-based flow)

Design Process Note

Most screens were designed as mid-fi system blueprints and implemented directly in code to move quickly; one hi-fi screen (light/dark) served as the visual reference.

Reusable UI Patterns

Reusable UI patterns to keep the experience consistent across screens

Key Design Decisions

Step-based Navigation

Breaking the workflow into clear steps reduces cognitive load for first-time users and makes the "next action" obvious at every stage.

Educator-Friendly Language

Avoided technical jargon and data terminology, using terms like "themes," "strengths," and "areas for growth" that resonate with teaching practice.

Progressive Disclosure

Insights are presented as scannable summaries first, with detailed evidence and supporting data available through drill-down interactions.

Consistent UI Patterns

Established reusable components and interaction patterns that work across all screens, ensuring predictability and reducing implementation time.
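Step-based navigation reduces to a small rule: the next action is the first unfinished step, and later steps stay locked until earlier ones are done. Here is a minimal Python sketch, assuming the four flows named in the workflow design (upload, configure, analysis, review). The function names and action labels are hypothetical.

```python
from typing import Dict, List, Optional, Set

STEPS: List[str] = ["upload", "configure", "analyze", "review"]

# Hypothetical labels for each step's primary action.
NEXT_ACTION_LABELS: Dict[str, str] = {
    "upload": "Upload student work",
    "configure": "Configure analysis",
    "analyze": "Run analysis",
    "review": "Review insights",
}


def current_step(completed: Set[str]) -> Optional[str]:
    """Return the first unfinished step, or None when every step is done."""
    for step in STEPS:
        if step not in completed:
            return step
    return None


def can_open(step: str, completed: Set[str]) -> bool:
    # A step is reachable only after every step before it is complete.
    return all(earlier in completed for earlier in STEPS[: STEPS.index(step)])


done = {"upload", "configure"}
print(NEXT_ACTION_LABELS[current_step(done)])  # Run analysis
```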

Market Validation: Website + Demo Narrative

Outcomes from website + demo funnel work, to which I contributed: 6 new NJ district pilot signups following improved website positioning, 3 conference invitations (NJ and Alaska education conferences), and an 84.6% demo completion rate (11 of 13 completed the full demo flow).

What I Did

Owned website + demo experience iteration

Iterated on the website and demo experience in Webflow, refining the product narrative to better communicate value and use cases to prospective pilot districts.

Improved clarity of positioning and flow

Refined messaging and navigation flow so prospects could quickly understand "what LearningPulse does" and how it supports their instructional goals.

Supported pilot momentum and word-of-mouth interest

Made the demo experience easier to follow and more compelling, contributing to pilot signups and conference invitations through clearer value communication.

Before / After Messaging

Narrative direction examples (final copy varied by audience)

Before

Generic, tech-first positioning that didn't communicate clear educational value

After

"Evidence-based insights for student growth"

Outcome-focused messaging that resonates with educators' instructional goals

Impact: Clearer positioning helped prospects understand product value faster, reducing friction in the signup and demo flow.

Outcomes & Reflection

Learnings from data-to-decision products in regulated + GenAI contexts

What Worked

System-level thinking

Stable output structure → predictable UI rendering

Edge-state clarity

Improved trust in AI-assisted workflows

Reusable patterns

Sped up iteration in a startup environment

What I'd Improve Next

Product instrumentation

Lightweight tracking aligned with value signals, not click counting

First-run onboarding

Intentional landing state + quick role-based guidance

Self-serve support

Help center patterns to reduce support bottlenecks

What I Can Demo Live (Sanitized)

Interactive examples and documentation available during interview

Output Schema → Rendering

How structured output enables predictable UI states

No student content shown

Password Reset Flow Spec

Complete requirements + UI state documentation

Production-ready specs

AI Workflow State Matrix

Edge case handling for GenAI workflows

Sanitized examples only

Webflow Page Iteration

Before/after narrative structure improvements

Sanitized content versions

Approach to Portfolio Work

All examples shown are truthful representations of completed work. Metrics and outcomes reflect actual project results without embellishment. Student content and proprietary information have been sanitized or replaced with representative examples.

Verification

References available upon request

Evidence

GitHub + live demos during interview